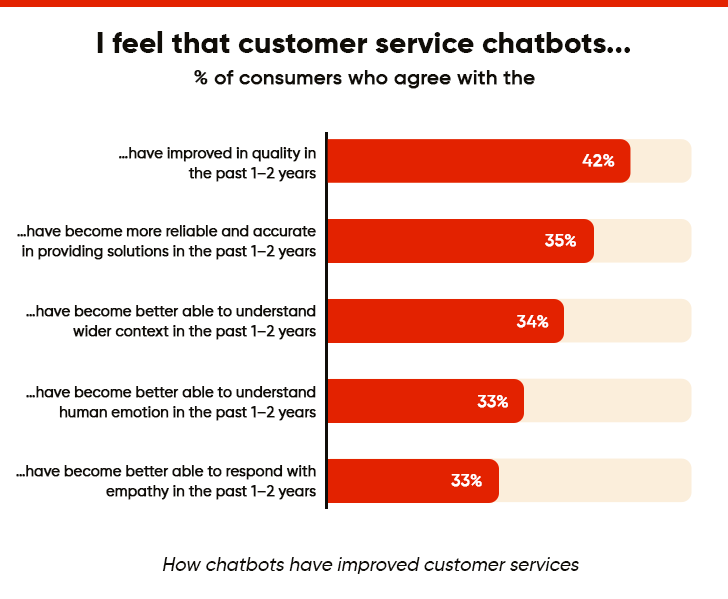

The way businesses deliver customer care and services has changed a lot. Almost two years ago, chatbots were little more than sophisticated FAQ engines. They handled routine queries and grievances at a speed and scale never experienced before. Then came AI!

The shift was from transactional automation to genuine conversational intelligence. Conversational AI chatbots are fundamentally different: they reason, adapt, and understand context. The businesses pulling ahead are building chatbots as intelligent interaction layers that span every customer touchpoint. So it is not surprising that well-known chatbots handle 1.5 billion requests per day.

> “Customer service leaders are determined to use AI to reduce costs, but return on those investments is far from guaranteed.” – Patrick Quinlan, Senior Director Analyst, Gartner Customer Service and Support Practice

What Is the Anatomy of Conversational AI Chatbots?

Conversational AI chatbots are software applications that simulate human-like interactions through text or voice interfaces. Modern versions easily interpret user intent, including nuance and sarcasm, and respond naturally.

These systems maintain context across sessions, handle ambiguity, and adjust their tone based on how the conversation is unfolding. That is what sets them apart from traditional chatbots that use rigid scripts. In practice, simple question-answer exchanges become meaningful dialogues that feel human.

On that note, let’s explore the different types of chatbots, each with real strengths and limitations.

| Capability | Rule-Based | ML-Powered | Conversational AI Chatbot |
|---|---|---|---|
| Handles ambiguity | No | Partial | Yes |
| Context retention | No | Limited | Multi-turn, persistent |
| Learns from interactions | No | Yes | Yes (continuously) |
| Emotion/tone awareness | No | No | Yes |
| Best for | FAQs, structured flows | Pattern recognition | Complex, open-ended queries |
| Integration complexity | Low | Medium | High |
| Enterprise readiness | Limited | Moderate | High |

Companies can choose between these categories based on their specific needs, available resources, and intended uses. Simple tasks work well with rule-based chatbots, while complex scenarios need sophisticated AI chatbots.


How Are Conversational AI Chatbots Reshaping Industries?

AI-powered chatbots are making a measurable impact across industries. These digital assistants solve numerous business challenges and deliver a strong return on investment.

I. Customer Service and Support

Customer service remains the primary domain for chatbots. Routine questions are handled efficiently, consistently, and without queue bottlenecks. The response time gap alone is telling: conversational chatbots reduce average wait times by 22 seconds.

What most teams overlook is the self-service resolution rate. A well-deployed conversational AI bot closes tickets without any human involvement.

Key advantages organizations gain:

- 24/7 availability across time zones

- Consistent responses to common questions

- Smooth handoff to human agents when needed

- Multiple language support without extra staff costs

II. Automation of Business Processes

AI conversation bots excel at streamlining internal operations. They handle everything from data entry to compliance documentation. This frees employees from doing repetitive work.

Finance teams use chatbots to process expense reports and verify documentation, cutting processing time considerably. HR departments deploy them to guide new hires through paperwork and policy questions without consuming hours of human attention.

III. Seamless Appointment Scheduling

AI chatbots have reshaped how appointment-based businesses work. Fewer no-shows. Fewer booking conflicts. Less administrative drag.

Rather than relying on manual coordination, professional services firms now let AI chatbots handle client scheduling: checking availability, accounting for meeting length requirements, factoring in preferences, and presenting the best options automatically.

IV. Virtual Assistants for Employee Productivity

AI chatbots also serve as personal productivity assistants. They help staff find resources, retrieve documents, and access information. No need to dig through complex databases or interrupt colleagues.

In large organizations, these assistants cut the time spent searching for information. They also improve knowledge sharing. Employees can ask questions in plain language instead of using rigid search terms.

Remote teams also benefit from AI conversation bots that keep information access consistent. This ensures off-site employees get the same support as those in the office.

V. Conversational Commerce

This is where conversational AI shifts from cost center to revenue driver. Conversational commerce refers to using AI conversation bots within the buying journey itself, not just post-purchase support.

Consider product recommendation engines embedded in chat, guided selling flows on ecommerce platforms, or instant quote generation via messaging apps. The use cases are expanding faster than most teams realize.

Omnichannel deployment is what makes this viable at scale. A conversational AI chatbot deployed across WhatsApp, web chat, in-app messaging, and voice creates consistent buying experiences regardless of where the customer starts. For most leading brands, this is already running in production.


What is the Technology Behind Modern Conversational AI Chatbots?

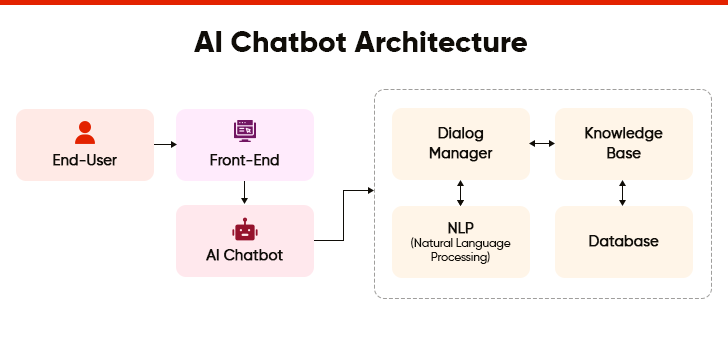

AI chatbot development relies on several connected components working in concert. Together, they create a system that doesn’t just process language; it understands it.

1. User Interface (UI): Bridging Human-Machine Interaction

The UI is what users actually experience. It’s the surface layer, but its design directly influences adoption rates and conversation completion. This visual layer includes all the interactive elements, such as message bubbles, input fields, buttons, and avatars, that make communication possible. A well-designed conversational AI chatbot platform typically includes:

- Clear welcome messages

- Quick-reply buttons that reduce typing effort

- Chat history access for reference

- Feedback mechanisms to improve responses

The way an interface looks shapes how users feel about it and how much they use it. Clean, accessible designs with appropriate color choices encourage more users to adopt the technology.

2. Natural Language Processing (NLP): The Brain That Understands Human Language

NLP forms the foundation of smart chatbot conversations. It converts raw text into structured, machine-usable meaning through several steps:

- Tokenization: Breaking text into words

- Intent Recognition: Figuring out what users want

- Entity Extraction: Pulling out key information like dates or locations

Natural Language Understanding (NLU) handles sentence structure, semantics, and context. Where NLU decodes meaning, Natural Language Generation (NLG) does the inverse. It converts the chatbot’s intended response into language that sounds natural.
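The three steps listed above can be sketched in a few lines of Python. This is a minimal illustration, not how a production NLP stack works: the intent names, keyword sets, and the ISO-style date pattern are all hypothetical, and a real system would typically replace the keyword matching with a trained classifier.

```python
import re
from dataclasses import dataclass, field

# Hypothetical intents and trigger keywords, chosen only for illustration.
INTENT_KEYWORDS = {
    "book_appointment": {"book", "schedule", "appointment"},
    "track_order": {"track", "order", "delivery"},
}

# Assumes dates are written as YYYY-MM-DD; real entity extractors handle far more formats.
DATE_PATTERN = re.compile(r"\b\d{4}-\d{2}-\d{2}\b")


@dataclass
class ParsedMessage:
    tokens: list[str]
    intent: str
    entities: dict[str, str] = field(default_factory=dict)


def tokenize(text: str) -> list[str]:
    """Tokenization: lowercase the text and split it into word-like tokens."""
    return re.findall(r"[a-z0-9]+(?:-[a-z0-9]+)*", text.lower())


def recognize_intent(tokens: list[str]) -> str:
    """Intent recognition: pick the intent whose keywords overlap most with the tokens."""
    token_set = set(tokens)
    scores = {name: len(words & token_set) for name, words in INTENT_KEYWORDS.items()}
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else "unknown"


def extract_entities(text: str) -> dict[str, str]:
    """Entity extraction: pull out the first date-like value, if any."""
    match = DATE_PATTERN.search(text)
    return {"date": match.group()} if match else {}


def parse(text: str) -> ParsedMessage:
    """Run the three steps in order and bundle the result."""
    tokens = tokenize(text)
    return ParsedMessage(tokens, recognize_intent(tokens), extract_entities(text))
```

Calling `parse("Please book an appointment on 2025-03-14")` yields the intent `book_appointment` and a `date` entity, which is the kind of structured output the later components consume.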

3. Dialog Manager: Enabling Contextual Conversations

The dialog manager controls how conversations flow and keeps track of context. Unlike basic systems that just answer questions, this component remembers previous messages and creates coherent conversations.

It picks the right responses based on what’s happening now, what the user wants, and where the conversation stands at any point in time. The dialog manager also decides when to ask for clarity, give information, or let a human take over.
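To make the dialog manager's role concrete, here is a small sketch of state tracking and action selection. Everything named here is an assumption for illustration: the `REQUIRED_SLOTS` table, the action strings, and the rule that two unrecognized turns in a row trigger a human handoff.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical: the pieces of information each intent needs before it can be fulfilled.
REQUIRED_SLOTS = {"book_appointment": ["date", "time"]}

# Assumed policy: hand over to a human after this many unrecognized turns.
MAX_FAILED_TURNS = 2


@dataclass
class DialogState:
    intent: Optional[str] = None
    slots: dict[str, str] = field(default_factory=dict)
    failed_turns: int = 0


def next_action(state: DialogState, intent: str, entities: dict[str, str]) -> str:
    """Update the conversation state with the latest turn and choose the next action."""
    # Nothing understood yet and no earlier intent to fall back on.
    if intent == "unknown" and state.intent is None:
        state.failed_turns += 1
        if state.failed_turns >= MAX_FAILED_TURNS:
            return "handoff_to_human"
        return "ask_clarification"

    # Keep the earlier intent when the new turn only supplies missing details.
    if intent != "unknown":
        state.intent = intent
    state.failed_turns = 0
    state.slots.update(entities)

    missing = [s for s in REQUIRED_SLOTS.get(state.intent, []) if s not in state.slots]
    if missing:
        return f"ask_for_{missing[0]}"
    return "fulfil_request"
```

Because the state persists between calls, a follow-up message that contains only a time is still treated as part of the booking request rather than as a new, unrecognized query.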

4. Machine Learning Models: Continuous Learning for Smarter Interactions

AI bots get better through machine learning algorithms that study conversation patterns. These models learn from past conversations and user feedback.

With each conversation, the models spot what works and what doesn’t. This learning process helps chatbots handle increasingly complex questions over time. No need to reprogram them. The more interactions they process, the more valuable they become.

> “Much of what we do with machine learning happens beneath the surface. Machine learning drives our algorithms for demand forecasting, product search ranking, product & deal recommendations, and much more…quietly but meaningfully improving our core operations.” – Jeff Bezos, Founder & Executive Chairman, Amazon

5. Large Language Models: The Intelligence Shift

This is the development that separates modern conversational AI bots from everything that came before. LLMs such as GPT-4, Gemini, and Claude have raised the ceiling on what chatbot responses can look like.

Rather than matching inputs against a static response library, they generate contextually coherent, nuanced replies that adapt to conversation history in real time.

Two concepts matter most here:

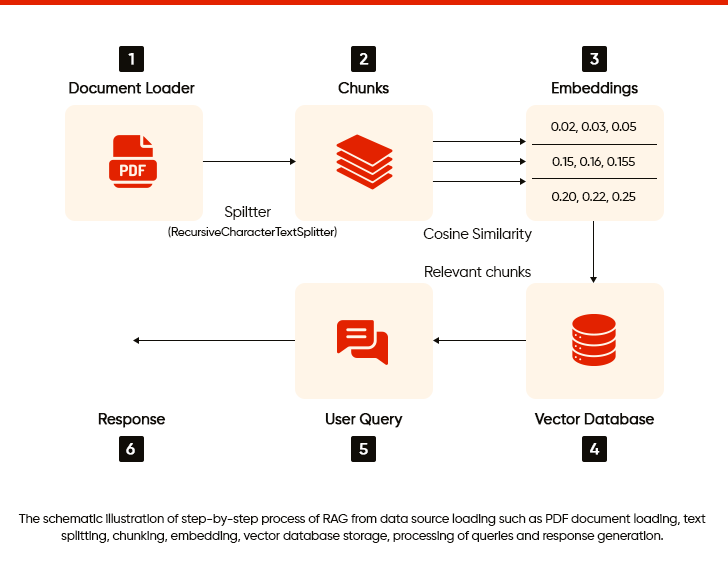

- Retrieval-Augmented Generation (RAG) connects the LLM to your proprietary knowledge base, grounding responses in accurate, company-specific information rather than general training data. This directly addresses the hallucination risk that comes with pure LLM deployment.

- Prompt Engineering is the discipline of structuring inputs to the LLM to produce consistent, on-brand, and reliable outputs. In enterprise deployments, this is where a huge share of the tuning effort lives and where under-investment shows up quickly in response quality.
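The two concepts can be shown together in a short sketch: retrieve the most relevant passage, then build a prompt that instructs the model to stay within it. This is a simplified illustration under stated assumptions. The knowledge-base snippets are made up, word overlap stands in for the vector search a real RAG system would normally use, and no actual LLM is called; the sketch stops at the prompt that would be sent.

```python
import re

# Hypothetical knowledge-base snippets, invented for this example.
KNOWLEDGE_BASE = [
    "Refunds are issued within 5 business days of receiving the returned item.",
    "Standard shipping takes 3 to 7 business days.",
    "Support is available by chat 24 hours a day.",
]


def words(text: str) -> set[str]:
    """Lowercased set of word tokens in the text."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: list[str], top_k: int = 1) -> list[str]:
    """Rank documents by word overlap with the query (a stand-in for vector search)."""
    query_words = words(query)
    ranked = sorted(documents, key=lambda doc: len(query_words & words(doc)), reverse=True)
    return ranked[:top_k]


def build_prompt(query: str, passages: list[str]) -> str:
    """Prompt engineering step: constrain the model to the retrieved context."""
    context = "\n".join(f"- {p}" for p in passages)
    return (
        "Answer using only the context below. "
        "If the answer is not in the context, say you do not know.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}"
    )


question = "How long do refunds take?"
prompt = build_prompt(question, retrieve(question, KNOWLEDGE_BASE))
```

The instruction to admit when the answer is missing is one common way to reduce hallucination, although it does not remove the risk entirely.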

Beyond text, voice and multimodal capabilities now sit on top of this LLM foundation. A conversational AI chatbot platform built on modern LLMs can handle voice input, image interpretation, and document processing, all within a single conversation thread.


What Are Conversational Chatbot Implementation Challenges and How to Solve Them?

Conversational chatbots bring substantial benefits. However, getting them right is rarely straightforward.

| Challenge | Business Impact | Mitigation Strategy |
|---|---|---|
| Data Security and Privacy | High | Role-based access controls, data masking, end-to-end encryption, third-party security audits |
| Hallucination and Response Accuracy | High | RAG architecture, confidence thresholds, ongoing response auditing, human review loops |
| Conversation Design Quality | Medium | Dedicated dialog design sprints, edge case mapping, iterative testing with real user data |
| Responsible AI and Explainability | Medium | XAI-capable platform selection, audit trail logging, uncertainty flagging in regulated contexts |
| Legacy System Integration | Medium | API-first architecture, middleware layer, phased integration roadmap |

I. Data Security Concerns

AI chatbots handle sensitive information routinely. This exposes organizations to data breaches and cyberattacks.

What makes this particularly difficult is the decentralized nature of chatbot deployment. Traditional systems assume centralized control, but chatbot vulnerabilities don’t follow that pattern.

Employees often share sensitive information unknowingly when using AI tools. That is why companies like JPMorgan Chase, Verizon, and Amazon have restricted or completely banned their staff from using certain AI platforms.

II. Hallucination Risks and Response Accuracy

This is the challenge enterprise teams most consistently underestimate. LLM-powered conversational AI bots can generate confident, fluent, and factually wrong responses. In customer-facing contexts, a single credible-sounding error can erode trust that took months to build.

RAG architectures reduce this risk by grounding responses in verified knowledge, but they don’t eliminate it. Ongoing response auditing and confidence-threshold guardrails are operational requirements, not configuration afterthoughts.
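A confidence-threshold guardrail with an audit trail might look like the sketch below. The threshold of 0.75, the `DraftAnswer` and `Decision` types, and the fallback wording are assumptions made for illustration. The sketch also assumes an upstream component already supplies a confidence score between 0 and 1, which is a non-trivial problem in its own right.

```python
from dataclasses import dataclass

# Assumed value; in practice the threshold is tuned against audited conversations.
CONFIDENCE_THRESHOLD = 0.75

# Hypothetical fallback message shown when the bot declines to answer on its own.
FALLBACK_TEXT = "I want to make sure you get an accurate answer, so I am passing this to a colleague."


@dataclass
class DraftAnswer:
    text: str
    confidence: float  # assumed to come from the model or a retrieval scorer, in [0, 1]


@dataclass
class Decision:
    action: str  # "send" or "escalate"
    text: str


def apply_guardrail(
    draft: DraftAnswer,
    audit_log: list[dict],
    threshold: float = CONFIDENCE_THRESHOLD,
) -> Decision:
    """Send confident answers, escalate the rest, and record every decision for review."""
    action = "send" if draft.confidence >= threshold else "escalate"
    audit_log.append({"action": action, "confidence": draft.confidence, "draft": draft.text})
    if action == "send":
        return Decision("send", draft.text)
    return Decision("escalate", FALLBACK_TEXT)
```

Logging the draft even when it is withheld gives reviewers the material they need for ongoing response auditing.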

III. Conversation Design Challenges

Building a functional bot is the easy part. Designing one that feels natural, recovers gracefully from edge cases, and guides users without friction requires conversation design expertise that most organizations haven’t yet developed.

Poor dialog design is the leading cause of low self-service resolution rates. It’s also, in most cases, the most fixable problem, given the right investment.

IV. Responsible AI and Explainability

Explainable AI is no longer a compliance checkbox. It’s a trust mechanism. Enterprise buyers and regulators increasingly expect AI systems to demonstrate how they reach conclusions. This is especially acute in regulated industries.

Conversational AI bots operating in financial services or healthcare must surface reasoning, flag uncertainty, and log decisions for audit. At this point, selecting a conversational AI chatbot platform with built-in XAI capabilities is a baseline expectation, not a point of differentiation.

These challenges are real, but proper planning and implementation can address them without giving up the benefits chatbots offer. Teams should acknowledge these hurdles upfront, instead of discovering them halfway through AI chatbot development.

Which is the Right Path: Build, Buy, or Configure?

One of the most consequential decisions in any conversational AI initiative is rarely discussed in vendor literature: should your organization build a custom solution, procure a platform, or configure an off-the-shelf tool? Each approach carries distinct trade-offs in cost, speed, control, and long-term flexibility.

The right answer depends on the specificity of your workflows, the maturity of your internal engineering team, and the timeline you are working to.

Decision matrix across the three primary deployment approaches:

| Approach | Best Suited for | Time to Deploy | Cost Profile | Flexibility | Engineering Requirement |
|---|---|---|---|---|---|
| Build Custom | Highly unique workflows, proprietary data, full IP ownership | 6–18 months | High | Maximum | Dedicated AI/ML team |
| Buy a Platform | Standard enterprise use cases, proven integrations, speed to market | 10–16 weeks | Medium | Moderate | Implementation + configuration team |
| Configure (Low-Code) | SMBs, simple flows, low query complexity | 2–6 weeks | Low | Limited | Minimal; citizen |

How to Make the Call?

Organizations with highly regulated workflows, sensitive proprietary data, or deeply bespoke customer journeys will typically find that platform or custom-build approaches offer the necessary control and compliance posture.

Those seeking rapid deployment for well-defined use cases, such as IT helpdesk, HR self-service, and appointment scheduling, will realize faster ROI through a platform or low-code configuration path.

It is also worth noting that these approaches are not mutually exclusive. Many enterprises begin with a platform deployment, validate the use case, and subsequently invest in custom-built components where differentiation matters most. Starting with the highest-friction use case and working backward remains the most reliable sequencing strategy.


What Trends Are Defining the Next Generation of Conversational AI Chatbot Development?

AI chatbot development has entered a phase where businesses aim to create more human-like and transparent experiences. Modern virtual assistants are moving beyond simple text conversations to match human communication patterns.

I. Multimodal Conversational AI

The next generation of conversational AI is not limited to text. Multimodal capabilities, including voice, image interpretation, document processing, and video, are now being integrated into unified conversation threads on leading enterprise platforms.

In practice, this shift means that a customer can photograph a damaged product and initiate a return within the same chat session. Or a field technician can share an image of a faulty component and receive diagnostic guidance instantly.

For organizations evaluating platforms, multimodal readiness is an architectural prerequisite for long-term investment protection.

II. Enhanced NLP

Sentiment analysis helps chatbots understand and interpret human emotions during conversations. This capability has matured significantly. Advanced bots have moved well beyond simple positive/negative classification. They now detect frustration, hesitation, and urgency, adjusting their responses accordingly. The interactions that result feel less transactional and more like actual dialogue.

III. Agentic Conversational AI

This is the most consequential architectural shift happening in conversational AI right now. An agentic conversational AI bot can receive a high-level instruction, decompose it into subtasks, trigger the relevant APIs, execute each step, and confirm completion. All this is done within a single conversation thread, without a human in the loop.
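The decompose, execute, and confirm loop described above can be sketched as follows. This is a toy illustration under clear assumptions: the three tool functions stand in for real API calls, the `PLANS` table replaces the plan an LLM would normally generate, and the order and label values are invented.

```python
from typing import Callable

# Hypothetical tools standing in for real API calls. Each one reads and
# updates a shared context dictionary and returns a short status message.
def look_up_order(context: dict) -> str:
    context["order_status"] = "delivered"
    return "order found"


def create_return_label(context: dict) -> str:
    context["label_id"] = "LABEL-001"
    return "return label created"


def notify_customer(context: dict) -> str:
    return f"customer notified about {context['label_id']}"


TOOLS: dict[str, Callable[[dict], str]] = {
    "look_up_order": look_up_order,
    "create_return_label": create_return_label,
    "notify_customer": notify_customer,
}

# Hypothetical fixed plans; in an LLM-based agent the model would produce the subtasks.
PLANS = {"process_return": ["look_up_order", "create_return_label", "notify_customer"]}


def run_agent(instruction: str) -> list[str]:
    """Decompose the instruction into subtasks, run each tool, and confirm completion."""
    steps = PLANS.get(instruction)
    if steps is None:
        return ["no plan available; escalate to a human"]
    context: dict = {}
    log = []
    for name in steps:
        log.append(f"{name}: {TOOLS[name](context)}")
    log.append("all steps completed")
    return log
```

The shared context is what lets a later step, such as the customer notification, use the output of an earlier one.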

Ready to Leverage a Conversational AI Chatbot?

Conversational AI chatbots have moved well past their origins as automated FAQ responders. What’s taking shape now is something more consequential: an intelligent interaction layer connecting customers, employees, and systems in real time across modalities, channels, and use cases.

The organizations that win won’t be those who deployed first; they’ll be those who deployed deliberately. With the right architecture, meaningful measurement, and a genuine commitment to iteration, a conversational AI chatbot platform becomes one of the highest-ROI investments in the enterprise technology stack.

If your organization is evaluating or scaling conversational AI, start with the use case that creates the most friction today and work backward from there.

Frequently Asked Questions

What is the difference between a regular chatbot and a conversational AI chatbot?

A regular chatbot follows scripts and keyword triggers. It breaks the moment users go off-script. A conversational AI chatbot understands intent, holds context across turns, and handles ambiguous or multi-part queries without losing the thread. In enterprise settings, the performance gap between the two is significant, especially for complex service interactions.

How long does it take to deploy a conversational AI chatbot?

It depends heavily on integration complexity. A focused deployment can go live in 10–12 weeks. Full omnichannel rollouts with legacy system integration typically run four to nine months. Rushed timelines are the primary cause of poor post-launch performance, not platform limitations.

How can hallucinations be kept under control?

RAG architecture, confidence thresholds, and human review loops are the main controls. Training on high-quality, curated knowledge bases matters more than raw model size. Ongoing response auditing, especially for edge cases, is what separates reliable deployments from problematic ones.

Which industries see the strongest ROI from conversational AI chatbots?

Financial services, healthcare, ecommerce, and telecommunications see the strongest ROI. These industries share high inquiry volume, repetitive query types, and clear escalation paths. That said, internal enterprise use cases like IT helpdesk and HR self-service often deliver the fastest payback period, and are frequently underestimated.

Which metrics should be tracked to measure chatbot performance?

Focus on self-service resolution rate, escalation rate, average handling time, post-interaction CSAT, and fallback frequency. Deflection rate alone is a vanity metric: a bot that deflects but doesn't resolve just creates frustrated users who escalate anyway.
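Several of the metrics named above can be computed directly from conversation logs. The sketch below assumes a hypothetical `Conversation` record and uses one reasonable definition for each metric; teams often define these differently, and CSAT is left out because it depends on survey responses rather than log data.

```python
from dataclasses import dataclass


@dataclass
class Conversation:
    """Hypothetical log record for one finished conversation."""
    resolved_by_bot: bool    # closed without any human involvement
    escalated: bool          # handed over to a human agent
    fallback_turns: int      # turns where the bot answered "I did not understand"
    total_turns: int
    handling_seconds: float


def summarize(conversations: list[Conversation]) -> dict[str, float]:
    """Compute the log-based metrics under the definitions assumed above."""
    count = len(conversations)
    if count == 0:
        raise ValueError("at least one conversation is required")
    total_turns = sum(c.total_turns for c in conversations)
    return {
        "self_service_resolution_rate": sum(c.resolved_by_bot for c in conversations) / count,
        "escalation_rate": sum(c.escalated for c in conversations) / count,
        "average_handling_seconds": sum(c.handling_seconds for c in conversations) / count,
        "fallback_frequency": sum(c.fallback_turns for c in conversations) / total_turns,
    }


# Made-up sample data. The last conversation was deflected (never escalated)
# but also never resolved, which a deflection-only metric would count as a success.
sample = [
    Conversation(True, False, 0, 4, 60.0),
    Conversation(True, False, 1, 6, 120.0),
    Conversation(False, True, 3, 10, 300.0),
    Conversation(False, False, 2, 10, 120.0),
]
metrics = summarize(sample)
```

Tracking resolution and escalation side by side makes the gap between "deflected" and "resolved" visible in the numbers.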